“Instagram Helped Kill My Daughter”: Censorship Tendencies in Social Media

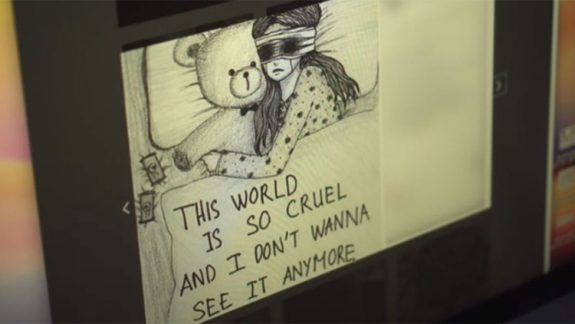

It is all a rather sorry tale. Molly Russell, another teenager gorged on social media content, sharing and darkly revelling, took her own life in 2017 supposedly after viewing what the BBC described as “disturbing content about suicide on social media.” Causation is presumed, and the platform hosting the content is saddled with blame.

Molly’s father was not so much seeking answers as attributing culpability. Instagram, claimed Ian Russell, “helped kill my daughter.” He was also spoiling to challenge other platforms: “Pinterest has a huge amount to answer for.” These platforms do, but not in quite the same way suggested by the aggrieved father.

The political classes were also quick to jump the gun. Here was a chance to score a few moral points as a distraction from the messiness of Brexit negotiations. UK Health Secretary Matt Hancock was in combative mood on the Andrew Marr show: “If we think they need to do things they are refusing to do, then we can and we must legislate.” Material dealing with self-harm and suicide would have to be purged. As has become popular in such instances, the purging element would have to come from technology platforms themselves, helped along by the kindly legislators.

Any time the censor steps in as defender of morality, safety and whatever tawdry assertions of social control, citizens should be alarmed. Such attitudes are precisely the sorts of things that empty libraries and lead to the burning of books, even if they host the nasty and the unfortunate. Content deemed undesirable must be removed; offensive content must be expunged to make us safe. The alarming thing here is that compelling the tech behemoths to undertake such a task has the effect of granting them even more powers of social control than before. Don’t they exert enough control as it is?

While social media giants can be accused, on a certain level, of faux humanitarianism and their own variant of sublimated sociopathic control (surveillance capitalism is alive and well), they are merely being hectored for the logical consequence of sharing information and content. This is set to become more concentrated, with Facebook, as Zak Doffman writes, planning to integrate Instagram and WhatsApp further to enable users “across all three platforms to share messages and information more easily”. Given Facebook’s insatiable quest for advertising revenue, Instagram is being tasked with being the dominant force behind it.

The onus of production and exchange is on customers: the customers supply the material, and spectacle. They are the users and the exploited. This, in turn, enables the social media tech groups to monetise data, trading it, exploiting it and tanking privacy measures in the process. The social media junkie is a modern, unreflective drone.

In doing so, an illusion of independent thinking is created, where debates can supposedly be had, and ideas formed. The grand peripatetic walk can be pursued. Often, the opposite takes place: groups assemble along lines of similar thought; material of like vein is bounced around under the impression it advances discussion when it merely provides filling for a cork-lined room or chamber of near-identical thinking. All of this is assisted by the algorithmic functions performed by the social media entities, all in the name of making the “experience” you have a richer one. It is far from their interest to make sure you juggle two contradictory ideas at the same time.

Instagram’s own “Community Guidelines” have the aim of fostering and protecting “this amazing community” of users. They suggest that photos and videos should only be shared by those with a right to do so. Featured photos and videos should be directed towards “a diverse audience”. A reminder that the tech giant is already keen on promoting a degree of control is evident in restrictions on nudity – a point that landed the platform in some hot water last year. “This includes photos, videos, and some digitally-created content that show sexual intercourse, genitals, and close-ups of fully-nude buttocks.” That’s many an art period banished from viewing and discussion.

The suicide fraternity is evidently wide enough to garner interest, even if the cult of self-harm takes much ethical punishment from the safety lobby. Material is still shared. Self-harm advisories are distributed through the appropriate channels.

Instagram’s response to this is to try to nudge such individuals towards content and groups that might equally sport reassuring materials to discourage suicide and self-harm. Facebook, through its recently appointed Vice-President of Global Affairs, Sir Nick Clegg, was even happy to point out that the company had prevented suicides: “Over the last year, 3,500 people who were displaying behaviour liable to lead to the taking of their own lives on Facebook were saved by early responders being pointed to those people and intervening at the right time.”

This is all to the good, but such views fail to understand that social media is used not so much to change ideas as to create communities who only worship a select few. The tyranny of the algorithm is a hard one to dislodge.

In engaging such content, we are dealing with narcotised dragoons of users, the unquestioning creating content for the unchallenged. That might prove to be the greatest social crime of all, the paradox of nipping curiosity rather than nurturing it, but instead of dealing with the complexities of information from this perspective, governments are going to make technology companies the chief censors. It might well be argued that enough of that is already taking place as it is, this being the age of deplatforming. Whether it be a government or a social media giant, the shoddy principle is the same: others know better than you do, and you should be protected from yourself.

11 comments

Commenters, in order: Keitha Granville; Kaye Lee; paul walter; Diannaart; Adrianne Haddow; paul walter; James Lawrie; paul walter; Adrianne Haddow; Adrianne Haddow; paul walter

Bullying in any form – cyber or irl – is never ok. It is certainly easier for young tech-savvy people to be as relentlessly nasty as they like with a keyboard or a screen to hide behind. But parents must step up to the plate here and be more vigilant than ever.

Join your youngsters at their own game.

No fancy phones at school, leave them in the office all day or better still they just have a simple phone that has no internet capacity. No devices in bedrooms, always in the living areas of the house where you can see what they see. Talk to your children often about the dangers of cyber bullies, so that they know to tell you immediately if it is happening to them. If you discover that your child IS the perpetrator, out them immediately to their school and to the parents of the bullied child.

Children/young teens are not in charge folks, that is your responsibility. Make sure you take it. Be the parent, not the best friend.

Keitha,

A close friend of mine is the principal at a challenging high school in the western suburbs of Sydney. Often when she deals with bullying and conflict between kids, she is hampered by parents getting involved in the dispute with Facebook wars which sometimes go further.

One thing she did with the kids was to bring two protagonists into her office and make them read the texts and online messages they had sent out loud to the other person. They really didn’t want to do it. It made them realise how hurtful they had been when they couldn’t bring themselves to say the same things directly to the other person in front of someone else. The kids quickly agreed they wanted it to stop, but getting the others who had piled onto the fight on board was more challenging.

No doubt about it, mainstream media and press have launched a moralistic, concerted campaign against social media, but it is because they are losing market share to the internet and because they are subject to scrutiny as people shop around to get different takes on a given story, identifying where information has been omitted. The worst example is Murdoch of course, who picked up their bat and ball and went home sulking, Cartman-like, to hide behind their self-alibiing wall, as already low credibility plummeted exponentially and too many lies were exposed.

They could be losing revenue, since sponsors realise that stories can no longer be slanted without detection and this credibility issue arises.

Also, Government censorship has meant broadsheet MSM has had to trawl down market, since it is quarantined from its natural sources of stories concerning a corrupt real world, so soap opera becomes the norm.

Kaye Lee

Could AIM set up a face to face?

We could film it … might go viral, cover the costs of getting protagonists into the same place and earn $’s.

Thinking outside the box here.

🤓

The downside of censoring social media platforms is handing control of information sharing to a particular body/institution, making the recognition of, and debunking of propaganda, pushed by various governments and their MSM flunkies, an impossible task.

As Binoy states

‘Any time the censor steps in as defender of morality, safety and whatever tawdry assertions of social control, citizens should be alarmed. Such attitudes are precisely the sorts of things that empty libraries and lead to the burning of books, even if they host the nasty and the unfortunate. Content deemed undesirable must be removed; offensive content must be expunged to make us safe.’

We all glean our information from a variety of sources, and if we read and think critically, use this information in forming our beliefs and opinions.

Would we be happy with Dutton, Morrison or Kelly deciding what we can read and who we can share our opinions with?

Even the Facebook censors have trouble deciding what a cultural image is, as opposed to morally questionable images. (Images of bare-breasted Australian indigenous dancers were deemed not to meet Facebook standards, yet bare-breasted white girls riding bicycles with dildos strapped to the handlebars were acceptable.)

Paul Walter nails it. The campaign against social media is an attempt to take control of the information which reaches us, and enables ‘them’ to avoid scrutiny and transparency.

While Molly’s suicide is extremely sad, one questions why a child would be visiting suicide sites in the first place.

We, as parents, need to be constantly vigilant with our children’s on-line and off-line lives.

Thanks again, Adrianne Haddow.

Sometimes I wonder if I am just talking to myself.

Hopefully adding to the conversation, I wonder if the internet is censorable, and if parents should be blamed when parental censorship is just not enforceable. Once again (let alone considerations equating to helicopter authority), does it come down to who censors when laws like the last DR ones re government surveillance are passed: who does the censoring, and at what cost to others, including the transgressing of legitimate privacy for members of the community for ideological reasons? Also, businesses established on privacy as their main selling point are having the rug pulled from under them.

Kids know about the Dark Web and are frequent users and it is this reflexive constraint that sends them there.

As it was once said: ‘educate, don’t legislate’.

Reminds me of why many young people smoked pot back in the hippy days.

No need for thanks, Paul.

We seem to have the same healthy distrust of the powers our governments are taking upon themselves, in order to protect us from ourselves, and others who tell a different story to what they are spruiking.

I fear the take-over of our world by corporations, and their supporters in local and federal governments.

Just when we thought we’d left feudalism behind, up pops another group of self-styled elites who think they know better than us about how we should live our lives. It usually means more profit and power for them.

I don’t always comment but I do read, and agree with many of your postings.

No need for thanks, Paul.

We seem to have the same healthy distrust of the powers our governments are taking upon themselves, in order to protect us from ourselves, and others who tell a different story to what they are spruiking.

I fear the take-over of our world by corporations, and their supporters in local and federal governments.

Just when we thought we’d left feudalism behind, up pops another group of self-styled elites who think they know better than us about how we should live our lives. It usually means more profit for them.

I don’t always comment but I do read, and agree with many of your postings.

Do you notice, Adrianne, that the more you keep informed, the more the reasons crystallise as to why people need to keep an eye on what goes on in the world around them and a little less on their own precious little inner microverses?

I wonder if the same thing that happened to indigenes two hundred years ago is not beginning to repeat itself, and nothing would surprise me less in the Comfy Well-Fed and Dozy Country.